Tuesday 16 June: confidence intervals


Yesterday we learned that comparing p-values is an invalid way to draw conclusions from statistical tests. Yet many published papers use this trick. If all journals really forbade it, many research papers would become unpublishable, because they would not be able to draw any conclusions. Is there a way out of this problem?

Yes, there are several. The problem is a combination of three things:

- not publishing null results

- p-values can never indicate that an effect is zero; this is a special case of not being able to say that an effect is small

- p-values can be too high

You can do something about all three problems.

How to publish null results

First get the paper accepted, then run the experiment.

How to conclude that an effect is small

Use confidence intervals and high numbers of participants.

How to lower your p-values

Use within-subject designs rather than between-subject designs. Use binomial models rather than gaussian models.

Confidence intervals

Here are the German-language proficiency scores of the Dutch and Flemish speakers again:

```r
dutch = c(34, 45, 33, 54, 45, 23, 66, 35, 24, 50)
flemish = c(67, 50, 64, 43, 47, 50, 68, 56, 60, 39)
t.test (dutch, flemish, var.equal=TRUE)
##
##  Two Sample t-test
##
## data:  dutch and flemish
## t = -2.512, df = 18, p-value = 0.02176
## alternative hypothesis: true difference in means is not equal to 0
## 95 percent confidence interval:
##  -24.791  -2.209
## sample estimates:
## mean of x mean of y
##      40.9      54.4
```


How to interpret this confidence interval?

1. The confidence interval contains 95 percent of the data.

2. When you run many experiments and compute a confidence interval according to the standard procedure for it, the confidence interval will contain the population mean in 95 percent of the cases.

3. When you have run an experiment and have computed the confidence interval, there is a 95 percent probability that the population mean lies inside it.

4. The confidence interval has a 95 percent probability of containing the population mean.

5. A knowledgeable gambler can rationally bet 19 to 1 that the population mean is inside the confidence interval.

6. The confidence interval is the collection of true population means for which the observed mean has a p-value of more than 5 percent.

7. After we compute the confidence interval, we can be 95 percent confident that the true mean lies within it.


Option 1 is nonsense (it’s the definition in Harald Baayen’s book). Option 2 is the frequentist definition of the confidence interval. Option 3 is rejected by frequentist statisticians as well as by Fisher, who would say that the population mean is either inside this particular interval or outside it, so that the probability is either 1 or 0; you just don’t know which of the two is the case. Option 4 is ambiguous: it is true if you interpret it as option 2, but not if you interpret it as option 3 (according to frequentists and Fisher); so it’s a bit sloppy. Option 5 is true (for normal, i.e. gaussian, distributions). Bayesian statisticians (see Thursday’s lecture) make a big point of it, and for this reason they would also accept option 3! Option 6 is true for normal distributions, so there is a direct relationship between p-values and the confidence interval! Option 7 is ambiguous: it is true if you interpret it as option 5, but not for several other definitions of “confidence”.
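Option 6 can be checked directly on the Dutch and Flemish scores. The sketch below tests every candidate value for the true difference (Dutch minus Flemish) on a fine grid, using the `mu` argument of `t.test` to put that candidate into the null hypothesis, and keeps the candidates whose p-value is above 0.05. The grid (steps of 0.01 between -30 and 5) is an arbitrary choice; the kept candidates should run from about -24.79 to -2.21, which is the reported confidence interval up to the grid resolution.

```r
# Dutch and Flemish German-proficiency scores from the example above
dutch = c(34, 45, 33, 54, 45, 23, 66, 35, 24, 50)
flemish = c(67, 50, 64, 43, 47, 50, 68, 56, 60, 39)

# Arbitrary grid of hypothesized true differences (dutch minus flemish)
candidates = seq(-30, 5, by = 0.01)

# For each candidate m, the p-value of the test of "true difference == m"
pValues = sapply(candidates, function(m)
  t.test(dutch, flemish, mu = m, var.equal = TRUE)$p.value)

# Candidates that are NOT rejected at the 5 percent level
notRejected = candidates[pValues > 0.05]
range(notRejected)

# The interval that t.test reports directly, for comparison
t.test(dutch, flemish, var.equal = TRUE)$conf.int
```

The set of non-rejected values is one connected stretch, so `range` is enough to describe it.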

Some of these options can be illustrated with simulations.

The confidence interval contains the true mean in 95% of the cases

Let’s check that the probability (oops) of finding a confidence interval that contains the population mean (or difference) is indeed around 95 percent, by drawing a hundred thousand random samples from the Dutch and Flemish populations. The procedure is parallel to what we did yesterday for the relation between the t-value and the p-value.

In the simulation, we assume a difference of 10 between the two population means:

- \(\mu_d = 45, \mu_f = 55\)

- the true standard deviations are the same (arbitrarily, \(\sigma_d = \sigma_f = 8\))

Here is again how you obtain the data for one experiment (rnorm draws random numbers, so your own output will differ from the output shown here):

```r
numberOfParticipantsPerGroup = 10
mu.d = 45
mu.f = 55
sigma.d = sigma.f = 8
data.d = rnorm (numberOfParticipantsPerGroup, mu.d, sigma.d)
data.f = rnorm (numberOfParticipantsPerGroup, mu.f, sigma.f)
data.d
## [1] 42.36 24.81 42.49 44.07 51.96 50.18 62.61 30.93 48.21 58.44
data.f
## [1] 70.05 57.96 56.01 61.38 53.58 54.23 62.08 45.58 70.41 43.89
```

You get the confidence interval from such data as follows:

```r
numberOfParticipantsPerGroup = 10
mu.d = 45
mu.f = 55
sigma.d = sigma.f = 8
data.d = rnorm (numberOfParticipantsPerGroup, mu.d, sigma.d)
data.f = rnorm (numberOfParticipantsPerGroup, mu.f, sigma.f)
conf.int = t.test (data.d, data.f, var.equal=TRUE) $ conf.int
lowerBound = conf.int [1]
upperBound = conf.int [2]
lowerBound
## [1] -22.01
upperBound
## [1] -0.1221
```

This run does contain the true difference, which is -10.

Then try this a hundred thousand times, and see how often the true difference between the groups (-10) lies within the confidence interval:

```r
numberOfParticipantsPerGroup = 10
numberOfExperiments = 1e5
count = 0
for (experiment in 1 : numberOfExperiments) {
  mu.d = 45
  mu.f = 55
  sigma.d = sigma.f = 8
  data.d = rnorm (numberOfParticipantsPerGroup, mu.d, sigma.d)
  data.f = rnorm (numberOfParticipantsPerGroup, mu.f, sigma.f)
  conf.int = t.test (data.d, data.f, var.equal=TRUE) $ conf.int
  lowerBound = conf.int [1]
  upperBound = conf.int [2]
  trueDifference = mu.d - mu.f
  # count the experiments whose interval contains the true difference
  if (trueDifference > lowerBound && trueDifference < upperBound) {
    count = count + 1
  }
}
count
## [1] 94947
```

So 94947 of the 100000 intervals (94.9 percent) contain the true difference: indeed about 95 percent. Apparently, R’s procedure for determining the confidence interval does a good job.

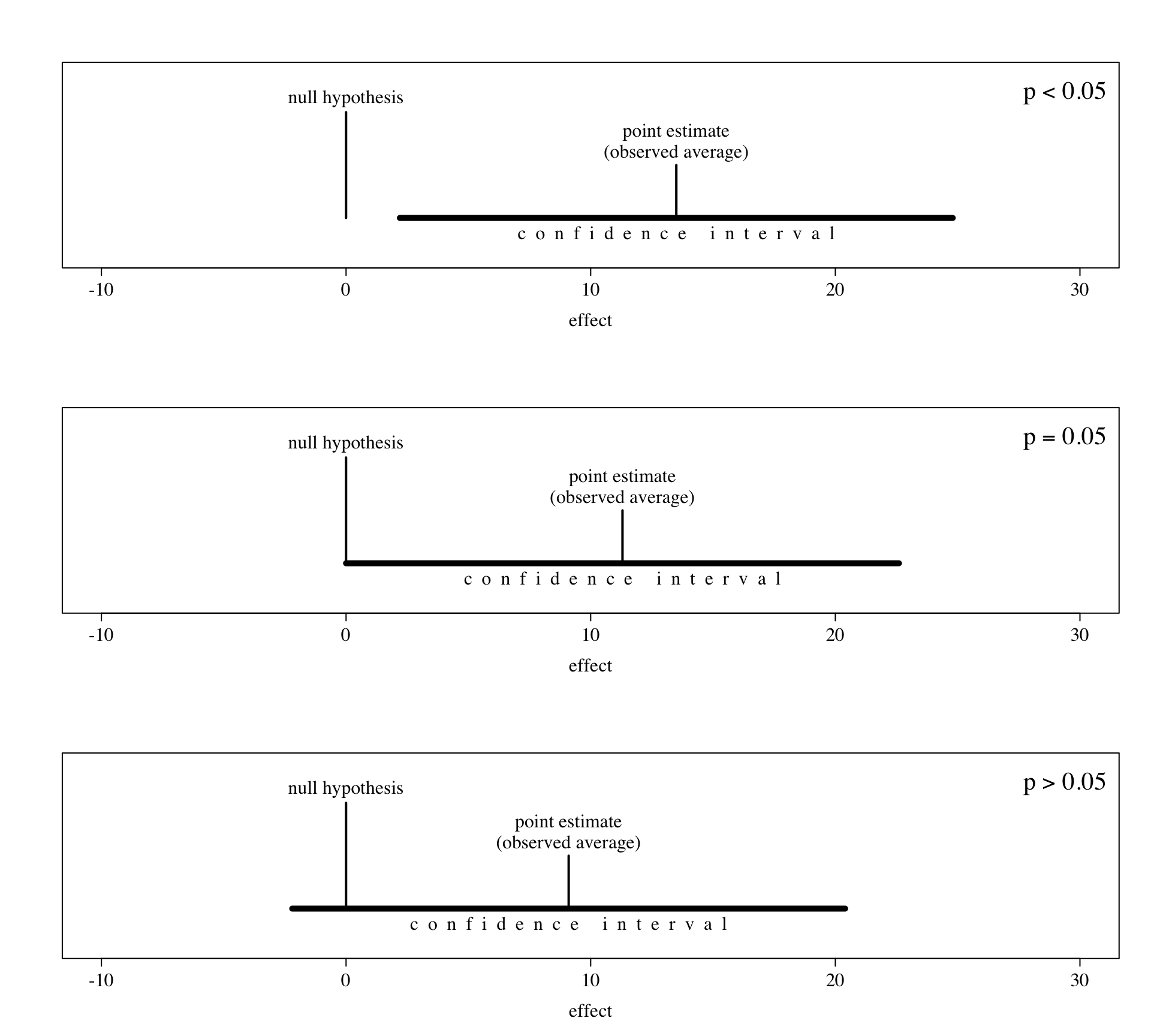
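The same check works for other confidence levels. The sketch below is a variant that is not part of the original exercise: it keeps the population values of the simulation above, asks `t.test` for a 90 percent interval through its `conf.level` argument, and uses `replicate` instead of an explicit loop with a counter. The seed and the smaller number of experiments (1e4, to keep it fast) are arbitrary choices. The proportion of intervals that contain the true difference should come out close to 0.90.

```r
set.seed(1)  # arbitrary seed, only for reproducibility
numberOfParticipantsPerGroup = 10
numberOfExperiments = 1e4
mu.d = 45
mu.f = 55
sigma.d = sigma.f = 8
trueDifference = mu.d - mu.f

# TRUE for each experiment whose 90 percent interval contains the true difference
contains = replicate(numberOfExperiments, {
  data.d = rnorm(numberOfParticipantsPerGroup, mu.d, sigma.d)
  data.f = rnorm(numberOfParticipantsPerGroup, mu.f, sigma.f)
  conf.int = t.test(data.d, data.f, var.equal = TRUE, conf.level = 0.90)$conf.int
  conf.int[1] < trueDifference && trueDifference < conf.int[2]
})

mean(contains)  # proportion of intervals containing the true difference
```

Because `contains` is a logical vector, its mean is directly the proportion of hits.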

If and only if the p-value is above 5%, the confidence interval includes the null hypothesis

You can show this by running the Dutch–Flemish experiment multiple times:

```r
numberOfParticipantsPerGroup = 10
mu.d = 45
mu.f = 55
sigma.d = sigma.f = 18
data.d = rnorm (numberOfParticipantsPerGroup, mu.d, sigma.d)
data.f = rnorm (numberOfParticipantsPerGroup, mu.f, sigma.f)
t.test (data.d, data.f, var.equal=TRUE)
##
##  Two Sample t-test
##
## data:  data.d and data.f
## t = -2.602, df = 18, p-value = 0.01803
## alternative hypothesis: true difference in means is not equal to 0
## 95 percent confidence interval:
##  -35.688  -3.802
## sample estimates:
## mean of x mean of y
##     32.90     52.64
```

In this case, p is below 0.05, and the confidence interval does not contain 0.

```r
numberOfParticipantsPerGroup = 10
mu.d = 45
mu.f = 55
sigma.d = sigma.f = 18
data.d = rnorm (numberOfParticipantsPerGroup, mu.d, sigma.d)
data.f = rnorm (numberOfParticipantsPerGroup, mu.f, sigma.f)
t.test (data.d, data.f, var.equal=TRUE)
##
##  Two Sample t-test
##
## data:  data.d and data.f
## t = -1.608, df = 18, p-value = 0.1252
## alternative hypothesis: true difference in means is not equal to 0
## 95 percent confidence interval:
##  -25.405   3.377
## sample estimates:
## mean of x mean of y
##     40.67     51.69
```

In this case, p is above 0.05, and the confidence interval does contain 0. When you copy-paste this many times into your R console, you will see that this relation always holds. The following picture illustrates this (I’m showing the R code as well, in case you would like to see how such pictures are created):

```r
par (mfrow = c(3, 1), cex.axis = 1.5, cex.lab = 1.5, family = "serif")
fun = function (pointEstimate, legend) {
  plot (NA, type = "n", xlab = "effect", ylab = "", yaxt = "n",
        xlim = c(-10, 30), ylim = c(-1.8, 1.8))
  legend ("topright", legend, bty = "n", cex = 2)
  lines (c(0, 0), c(-1, 1), lwd = 2)
  nullHypothesis = 0.0
  lines (c(pointEstimate, pointEstimate), c(-1, 0), lwd = 2)
  lines (c(pointEstimate-11.3, pointEstimate+11.3), c(-1, -1), lwd = 5)
  text (x = pointEstimate, y = 0.4, pos = 3, labels = "point estimate", cex = 1.5)
  text (x = pointEstimate, y = 0, pos = 3, labels = "(observed average)", cex = 1.5)
  text (x = pointEstimate, y = -1, pos = 1, offset = 0.7,
        labels = "c o n f i d e n c e i n t e r v a l", cex = 1.5)
  text (x = nullHypothesis, y = 1, pos = 3, offset = 0.6,
        labels = "null hypothesis", cex = 1.5)
}
fun (13.5, expression (p < 0.05))
fun (11.3, expression (p == 0.05))
fun ( 9.1, expression (p > 0.05))
```
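Instead of copy-pasting the experiment many times, you can let R do the bookkeeping. This sketch repeats the Dutch–Flemish experiment with the settings used above (\(\sigma = 18\), 10 participants per group) 1000 times, and records for each run whether p < 0.05 and whether the 95 percent interval excludes 0. The seed and the number of runs are arbitrary; the relation itself should hold in every run, so the cross-table should have zeros off the diagonal.

```r
set.seed(2)  # arbitrary seed, only for reproducibility
numberOfParticipantsPerGroup = 10
mu.d = 45
mu.f = 55
sigma.d = sigma.f = 18

# One row per simulated experiment, two logical columns
results = t(replicate(1000, {
  data.d = rnorm(numberOfParticipantsPerGroup, mu.d, sigma.d)
  data.f = rnorm(numberOfParticipantsPerGroup, mu.f, sigma.f)
  test = t.test(data.d, data.f, var.equal = TRUE)
  c(significant = test$p.value < 0.05,
    excludesZero = test$conf.int[1] > 0 || test$conf.int[2] < 0)
}))

table(significant = results[, "significant"],
      excludesZero = results[, "excludesZero"])
all(results[, "significant"] == results[, "excludesZero"])
```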

From this we can conclude that if you report the confidence interval in your paper, the reader immediately knows whether the effect is significant at the \(\alpha = 0.05\) level: it is significant if and only if the 95 percent confidence interval does not include the null hypothesis.

Neyman and Pearson must have loved the confidence interval, because it tells whether the effect is significant at the \(\alpha = 0.05\) level, and Fisher must have loved it as well, because one can immediately see whether the null hypothesis can be rejected and how big the effect could be.


Question: Does the computation of the confidence interval involve the null hypothesis anywhere?

- yes

- no

- don’t know


It doesn’t. That makes it quite different from p-value testing.
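To see that no null hypothesis is needed, here is a sketch that computes the equal-variance 95 percent interval for the Dutch and Flemish scores by hand. It uses only the observed difference, the pooled standard deviation, and a quantile of the t distribution, and it should reproduce the interval from -24.791 to -2.209 that `t.test` printed for these data.

```r
dutch = c(34, 45, 33, 54, 45, 23, 66, 35, 24, 50)
flemish = c(67, 50, 64, 43, 47, 50, 68, 56, 60, 39)

n.d = length(dutch)
n.f = length(flemish)
pointEstimate = mean(dutch) - mean(flemish)  # observed difference

# Pooled standard deviation (the equal-variance assumption of var.equal=TRUE)
pooledSD = sqrt(((n.d - 1) * var(dutch) + (n.f - 1) * var(flemish)) /
                (n.d + n.f - 2))
standardError = pooledSD * sqrt(1 / n.d + 1 / n.f)

# 97.5 percent quantile of the t distribution with n.d + n.f - 2 degrees of freedom
tCritical = qt(0.975, df = n.d + n.f - 2)

lowerBound = pointEstimate - tCritical * standardError
upperBound = pointEstimate + tCritical * standardError
c(lowerBound, upperBound)
```

Nowhere in this computation does a hypothesized value of the difference appear; the interval is built around the observed difference itself.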

The size of the effect

The confidence interval cannot help solve the problem of not being able to prove the null hypothesis. With the confidence interval one still cannot say “Spanish listeners improve, whereas Portuguese listeners don’t improve”, or “Estonians and Latvians perform equally well on the German-language proficiency task.”

But the confidence interval can help solve the problem of not being able to say that an effect is small. It can allow us to say “the Spanish listeners improved a lot, whereas the Portuguese listeners improved little, if at all”, or “the difference between Estonians and Latvians on the German-language proficiency task is small or zero.” These formulations (“little, if at all” and “small or zero”) explicitly include the null hypothesis, and also some non-null hypotheses, but not too many of them. You’re no longer reporting a null result!


Question: under what circumstances can you expect a confidence interval that includes the null hypothesis to be narrow enough to be reported in such a nice non-null-result manner?

- If the effect is really, really small.

- If you test really many participants.

- Don’t know.


Unfortunately, you may need lots and lots of participants. Even if the true effect is zero, the standard deviation between the participants may be so large that your confidence interval is still wide.

Let’s do some simulations. Suppose the average Estonian and the average Latvian have an equal German-language proficiency of 40.

```r
numberOfParticipantsPerGroup = 10
mu.e = 40
mu.l = 40
sigma.e = sigma.l = 8
data.e = rnorm (numberOfParticipantsPerGroup, mu.e, sigma.e)
data.l = rnorm (numberOfParticipantsPerGroup, mu.l, sigma.l)
t.test (data.e, data.l, var.equal=TRUE)
##
##  Two Sample t-test
##
## data:  data.e and data.l
## t = -1.01, df = 18, p-value = 0.326
## alternative hypothesis: true difference in means is not equal to 0
## 95 percent confidence interval:
##  -12.082   4.238
## sample estimates:
## mean of x mean of y
##     38.91     42.83
```

If you repeat this, then most of the time the bound of the confidence interval that lies farthest from zero has an absolute value of about 7 to 12. In the above sample, Estonians could be better than Latvians by up to 4.238, and Latvians could be better than Estonians by up to 12.082. Yesterday we had, a bit arbitrarily, called a difference of 4 “small”. If that is still our criterion today, then a potential difference of 12.082 cannot be called small. From this experiment we therefore cannot conclude with “confidence” that the difference between Estonians and Latvians is small.

This can be improved by testing a larger sample of participants, for example 1000:

```r
numberOfParticipantsPerGroup = 1000
mu.e = 40
mu.l = 40
sigma.e = sigma.l = 8
data.e = rnorm (numberOfParticipantsPerGroup, mu.e, sigma.e)
data.l = rnorm (numberOfParticipantsPerGroup, mu.l, sigma.l)
t.test (data.e, data.l, var.equal=TRUE)
##
##  Two Sample t-test
##
## data:  data.e and data.l
## t = -0.2698, df = 1998, p-value = 0.7873
## alternative hypothesis: true difference in means is not equal to 0
## 95 percent confidence interval:
##  -0.7918  0.6003
## sample estimates:
## mean of x mean of y
##     39.51     39.61
```

If you repeat this, then most of the time the bound farthest from zero has an absolute value of only about 0.7 to 1.2, i.e. roughly 10 times smaller than with 10 participants per group. In the sample here, the bound farthest from zero is -0.7918, whose absolute value is smaller than 4, so we can conclude that the difference between Latvians and Estonians on this task is “small”.
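The ranges “about 7 to 12” and “about 0.7 to 1.2” can be checked by simulation. The sketch below uses a hypothetical helper `farthestBound` (not from the original notes) that repeats the Estonian–Latvian experiment with a true difference of zero and returns, for each run, the absolute value of the interval bound farthest from zero. The seed and the 2000 repetitions are arbitrary choices; the exact quantiles will vary from run to run.

```r
# Hypothetical helper: absolute value of the 95 percent interval bound that lies
# farthest from zero, in repeated experiments where the true difference is zero
farthestBound = function(numberOfParticipantsPerGroup, numberOfExperiments = 2000,
                         mu = 40, sigma = 8) {
  replicate(numberOfExperiments, {
    data.e = rnorm(numberOfParticipantsPerGroup, mu, sigma)
    data.l = rnorm(numberOfParticipantsPerGroup, mu, sigma)
    conf.int = t.test(data.e, data.l, var.equal = TRUE)$conf.int
    max(abs(conf.int))
  })
}

set.seed(3)  # arbitrary seed, only for reproducibility
bounds10 = farthestBound(10)
bounds1000 = farthestBound(1000)

# Middle 80 percent of the farthest bounds for each sample size
quantile(bounds10, c(0.1, 0.9))
quantile(bounds1000, c(0.1, 0.9))

# How many times narrower, in terms of the medians
median(bounds10) / median(bounds1000)
```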

The mathematics of this situation was worked out long ago. It says two things:

- The width of the confidence interval tends to be inversely proportional to the square root of the number of participants. To get a 10 times narrower interval, you need 100 times as many participants (this was the example just above), and to halve the width of the interval, you need 4 times as many participants.

- The width of the confidence interval is proportional to the standard deviation within the groups. Above, this standard deviation was 8; had it been 16, the intervals would have been twice as wide.
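Both rules can be read off the half-width of the equal-variance interval for two groups of \(n\) participants each, \(t_{0.975,\,2n-2} \cdot s_p \sqrt{2/n}\), where \(s_p\) is the pooled standard deviation. The sketch below evaluates this with the true \(\sigma\) plugged in for \(s_p\); that substitution is an assumption, since in a real experiment the sample standard deviation varies from run to run. The helper name `halfWidth` is hypothetical.

```r
# Approximate half-width of the 95 percent interval for a difference between two
# groups of n participants each, with the true sigma plugged in for the pooled SD
halfWidth = function(n, sigma) {
  qt(0.975, df = 2 * n - 2) * sigma * sqrt(2 / n)
}

halfWidth(10, 8)     # 10 participants per group: about 7.5
halfWidth(40, 8)     # 4 times as many participants: roughly half as wide
halfWidth(1000, 8)   # 100 times as many participants: roughly 10 times narrower
halfWidth(10, 16)    # twice the standard deviation: exactly twice as wide
```

The ratios for more participants come out slightly above 2 and 10, because the t quantile also shrinks a little as the degrees of freedom grow; the standard deviation enters strictly proportionally.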

It is difficult to reduce the between-participant standard deviation without changing the whole design (see tomorrow), so if you want to be able to draw a conclusion whatever the size of the effect turns out to be, get many participants.

Practical advice

- Get many participants. If the effect exists and is not small, there’s a good chance you’ll detect it (p < 0.05).

- If p > 0.05, you haven’t detected the effect. However, you will be able to report a narrow confidence interval and therefore say that the effect is “small or zero”.
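How many is “many”? A rough planning sketch, under the same assumption as before (the true \(\sigma\) stands in for the pooled sample standard deviation): search for the smallest number of participants per group whose expected half-width stays below a chosen target. The helper `participantsNeeded` is hypothetical, and the target half-width of 2 is an arbitrary example, chosen so that an observed difference near zero leaves the farthest bound well below yesterday’s “small” criterion of 4.

```r
# Hypothetical planning helper: smallest number of participants per group for
# which the approximate half-width of the 95 percent interval is <= targetHalfWidth
participantsNeeded = function(targetHalfWidth, sigma, maxN = 1e6) {
  n = 2
  while (qt(0.975, df = 2 * n - 2) * sigma * sqrt(2 / n) > targetHalfWidth) {
    n = n + 1
    if (n > maxN) stop("target half-width not reached within maxN participants per group")
  }
  n
}

participantsNeeded(targetHalfWidth = 2, sigma = 8)
```

A simple linear search is enough here, because the half-width shrinks steadily as n grows.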


Other things you should not do to lower your p-value

Many tricks are in Simmons et al. (2011). Summary of advice: use the word “only” and don’t lie.